Deepfakes Are Now a Strategic Risk: What CTOs Must Do to Protect Their Organizations

By Rui Wang, CTO, AgentWeb

Why Deepfakes Are Now a Strategic Risk—and What CTOs Must Do About It

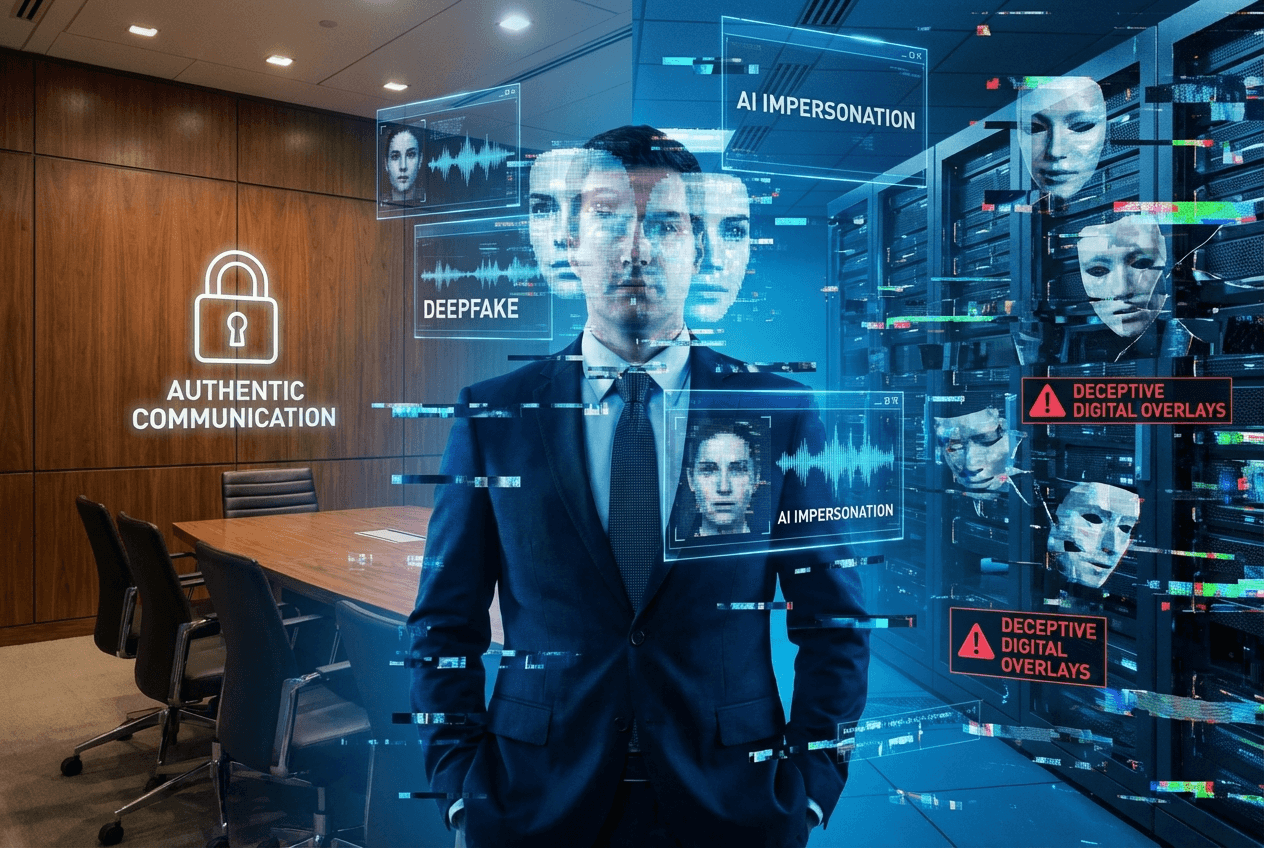

The Deepfake Threat: More Than Just a PR Nightmare

It’s tempting to see deepfakes as a niche issue—a problem mostly for celebrities, politicians, or public figures. But the truth is, deepfakes have quickly evolved beyond mere internet curiosities. Today’s AI-driven impersonations can shake entire businesses, undermine trust in digital communications, and expose critical vulnerabilities. For CTOs, especially those in fast-moving startups, deepfakes are no longer a speculative risk; they are a strategic one.

The Foreign Affairs article “How Deepfakes Could Lead to Doomsday” highlights one uncomfortable reality: we’ve entered an era where synthetic media can trigger international incidents, manipulate markets, and damage reputations overnight. For technology leaders, this means taking proactive steps—not just reacting after the fact.

How Deepfakes Became an Enterprise Security Risk

The New Face of Corporate Espionage

Cybersecurity used to be about firewalls, passwords, and encryption. Today, it’s also about defending corporate identity itself. Deepfakes (hyper-realistic synthetic video, audio, or images generated by AI models) are increasingly being weaponized. Consider the following scenarios:

- Impersonation of Executives: Imagine a convincing video of your CEO announcing a merger that isn’t happening. Before you can issue a correction, stock prices could tumble and competitors might exploit your moment of chaos.

- Spear Phishing with Deepfake Audio: Attackers can now mimic trusted voices on a conference call, instructing teams to transfer funds or share sensitive data.

- Fake News, Real Consequences: A viral deepfake video can undermine partnerships, erode shareholder confidence, or even invite regulatory scrutiny.

Deepfakes multiply the impact of traditional security risks. It’s not just about data leaks—now, misinformation itself can be weaponized against your business, often with plausible deniability for attackers.

Understanding the Role of Agentic AI

Why Autonomous AI Agents Magnify the Risk

The rise of agentic AI—autonomous systems capable of complex, goal-directed actions—makes deepfake threats even harder to track. Modern AI agents can:

- Identify high-value targets from public data

- Generate convincing synthetic media tailored for those targets

- Amplify and distribute deepfakes across multiple channels

These capabilities mean deepfakes can be deployed at scale, with minimal human oversight. The risk is no longer just about a rogue actor making a video in their bedroom. We’re facing coordinated AI-driven campaigns that can manipulate, deceive, and disrupt at speeds humans alone can’t match.

The Strategic Risks for Startups and Enterprises

Why CTOs Must Act Now

Startups are particularly vulnerable. Fast growth often means less mature security policies, more reliance on remote communications, and fewer resources to respond to rapidly evolving threats. Deepfake attacks can:

- Damage brand reputation before you’ve even built it

- Jeopardize investor confidence during critical funding rounds

- Trigger legal liabilities if customers, partners, or regulators are misled

For larger enterprises, the same risks apply and the stakes are higher. Deepfake incidents can trigger market shocks, regulatory investigations, or permanent loss of public trust.

Practical Steps CTOs Must Take—Right Now

1. Build Deepfake Awareness Across Your Organization

The first line of defense is awareness. Many employees have only a vague idea of what deepfakes are, let alone how to spot them. CTOs should:

- Organize regular training sessions on deepfake risks and detection

- Share examples of recent attacks and red flags to watch for

- Encourage a culture of healthy skepticism about digital communications

2. Implement Human-in-the-Loop Verification

No detection technology is foolproof, so automated checks need to be paired with human oversight. Human-in-the-loop systems can:

- Flag suspicious media for manual review before major decisions are made

- Require multi-factor authentication for sensitive transactions (not just voice or video verification)

- Set up escalation protocols for unusual requests—even if they seem to come from trusted voices

When an AI agent detects a possible deepfake, humans need to be involved in validating authenticity, especially for high-stakes communications.
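The verification rules above can be sketched as a small policy check. This is a minimal illustration, not a production design: the channel names, action names, and the 10,000 threshold are all hypothetical and would come from your own policy.

```python
from dataclasses import dataclass

# Hypothetical values; replace with your organization's own policy.
SPOOFABLE_CHANNELS = {"voice", "video", "email"}
SENSITIVE_ACTIONS = {"wire_transfer", "share_data", "change_credentials"}
HIGH_VALUE_THRESHOLD = 10_000  # illustrative amount


@dataclass
class Request:
    requester: str   # claimed identity, e.g. "ceo"
    channel: str     # how the request arrived
    action: str      # what is being asked for
    amount: float = 0.0
    confirmed_out_of_band: bool = False  # verified via a second, independent channel


def review_decision(req: Request) -> str:
    """Return 'approve', 'hold', or 'escalate' for a request."""
    if req.action not in SENSITIVE_ACTIONS:
        return "approve"
    # A trusted-sounding voice or face alone never authorizes a sensitive action.
    if req.channel in SPOOFABLE_CHANNELS and not req.confirmed_out_of_band:
        return "hold"
    if req.amount >= HIGH_VALUE_THRESHOLD:
        return "escalate"  # a second human must sign off
    return "approve"


# Example: a voice request for a large transfer is held until confirmed elsewhere.
print(review_decision(Request("ceo", "voice", "wire_transfer", 50_000)))
```

The design choice here is that a request stays on hold until someone confirms it through an independent channel, regardless of how convincing the original call or video looked.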

3. Invest in Deepfake Detection Tools

AI risk mitigation must include investment in robust detection technologies. The market now offers:

- Real-time video and audio analysis tools that flag synthetic media

- Forensic platforms that can trace the origins of viral content

- Blockchain-based watermarking systems that help verify the authenticity of critical communications

CTOs should evaluate these tools not just for IT teams, but for communication, marketing, investor relations, and legal teams.
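To make the idea of verifiable communications concrete, here is a minimal sketch using a shared-secret HMAC from the Python standard library. This is a deliberately simpler stand-in, not blockchain watermarking and not any vendor's product; the function names and the demo key are assumptions for illustration. Public announcements would more likely need asymmetric signatures, so that recipients can verify without holding the secret.

```python
import hashlib
import hmac


def sign_message(key: bytes, message: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_message(key: bytes, message: bytes, tag: str) -> bool:
    """Check the tag using a constant-time comparison."""
    expected = sign_message(key, message)
    return hmac.compare_digest(expected, tag)


# Hypothetical key; in practice it would live in a secrets manager.
key = b"demo-key-not-for-production"
announcement = b"Q3 results will be published on the investor portal."
tag = sign_message(key, announcement)

print(verify_message(key, announcement, tag))                  # genuine message
print(verify_message(key, b"We are announcing a merger.", tag))  # altered message
```

The same pattern applies to media files: sign the file's bytes at publication time, and treat any clip without a valid tag as unverified.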

4. Prepare Incident Response Playbooks

If, or more likely when, a deepfake incident occurs, speed and coordination are vital. CTOs should:

- Develop clear protocols for responding to suspected deepfake attacks

- Identify key internal and external stakeholders (legal, PR, regulators)

- Set up rapid-response teams that can investigate, contain, and communicate about incidents

Having a playbook isn’t optional; it’s a core part of AI risk management in today’s threat landscape.
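One way to keep a playbook actionable is to write it down as data, so that anyone can see which steps are unblocked at a given moment. The sketch below assumes a hypothetical four-step playbook; the step names, owners, and dependencies are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    owner: str                      # hypothetical team label
    requires: tuple[str, ...] = ()  # steps that must finish first


# Illustrative playbook; adapt steps and owners to your organization.
PLAYBOOK = [
    Step("investigate", owner="security"),
    Step("contain", owner="security", requires=("investigate",)),
    Step("notify_legal", owner="legal", requires=("investigate",)),
    Step("communicate", owner="pr", requires=("contain", "notify_legal")),
]


def ready_steps(done: set[str]) -> list[str]:
    """Return steps not yet done whose prerequisites are all complete."""
    return [
        s.name
        for s in PLAYBOOK
        if s.name not in done and all(r in done for r in s.requires)
    ]


# Once the investigation is finished, containment and legal can proceed in parallel.
print(ready_steps({"investigate"}))
```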

5. Collaborate on Industry Standards

No organization can fight deepfakes alone. CTOs should:

- Join industry consortia focused on deepfake detection and response standards

- Advocate for clearer regulations and best practices

- Collaborate with peers to share threat intelligence and response strategies

Agentic AI Safeguards: What Really Works?

Building Resilient Agentic AI Systems

As CTO at AgentWeb, I’ve seen firsthand how agentic AI can be both a risk and an asset. The key is designing agents with built-in safeguards. This includes:

- Limiting access to sensitive data and systems

- Implementing ethical guardrails to prevent misuse

- Using explainable AI models so humans can understand, audit, and challenge decisions

A human-in-the-loop approach remains the gold standard. Autonomous agents should augment, not replace, human judgment, particularly when authentication decisions, money, or reputation are at stake.
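The safeguards listed above (limited access, guardrails, auditability) can be sketched as a gate that sits between an agent and its tools. This is a minimal illustration under stated assumptions, not a description of AgentWeb's systems; the tool names and the `approve` callback are hypothetical.

```python
from typing import Callable

# Hypothetical tool names; the agent may only call what is listed here.
ALLOWED_TOOLS = {"search_docs", "draft_email", "send_payment"}
NEEDS_HUMAN_APPROVAL = {"send_payment"}

audit_log: list[dict] = []  # every attempt is recorded for later review


def run_tool(tool: str, args: dict, approve: Callable[[str, dict], bool]) -> str:
    """Gate an agent's tool call; return 'denied', 'rejected', or 'executed'."""
    if tool not in ALLOWED_TOOLS:
        outcome = "denied"      # least privilege: unlisted tools are blocked
    elif tool in NEEDS_HUMAN_APPROVAL and not approve(tool, args):
        outcome = "rejected"    # the human reviewer said no
    else:
        outcome = "executed"    # the real tool call would happen here
    audit_log.append({"tool": tool, "args": args, "outcome": outcome})
    return outcome


# Example: a payment goes through only if the human reviewer approves it.
print(run_tool("send_payment", {"amount": 250}, approve=lambda tool, args: False))
```

Because every call is logged along with its outcome, reviewers can later audit and challenge what the agent attempted, including the calls that were blocked.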

Practical Implications for Startups

Startups should prioritize:

- Early adoption of deepfake resilience policies—even before scaling up

- Partnering with trusted vendors for deepfake detection and media authentication

- Building a security-first culture where every employee knows what’s at stake

Looking Ahead: The Role of CTOs in an AI-Driven World

CTOs are on the front lines of the deepfake battle. The business implications go far beyond IT, reaching into brand, legal, investor relations, and regulatory compliance. By treating deepfakes and AI risk as strategic priorities (not just technical ones), technology leaders can safeguard their organizations against the next wave of digital deception.

Final Thoughts

Deepfakes aren’t just a technical problem—they’re a strategic risk. Combining agentic AI safeguards, human-in-the-loop verification, and proactive organizational policies is no longer optional. It’s the price of doing business in the age of synthetic media. For startups and enterprises alike, the time to act is now.

Author: Rui Wang, PhD (CTO at AgentWeb)